‘Extra! Extra! Read all about it!’ as newspaper vendors used to shout. The Pint of Science Festival is happening across the UK in the week beginning Monday 18th May, with three evenings of talks in venues in 43 locations. I am talking on the first evening, May 18th, in Lime Street Social (51 Lime Street, L1 1JQ) on ‘I Sell Here, Sir, What all World Desires to have – POWER’. My title is a quote from Matthew Boulton who, with James Watt, set up a factory in Birmingham to produce steam engines in the 18th century. I am going to talk about producing nuclear power units in a factory (see ‘Commoditization of civil nuclear power’ on June 5th, 2024). If you would like to come to the event, hear three other speakers besides me and have a pint or two, then please register at https://pintofscience.co.uk/events/liverpool/.

Perched blocks and muskoxen

Greenland has been in the news recently and, as a consequence, more people know about it than when I visited there about 45 years ago (see ‘Ice bores and what they can tell us’ on January 12th, 2022). I was part of a small expedition that spent a short Arctic summer on the Bersaekerbrae glacier in North East Greenland. We air-freighted our equipment from Glasgow to Reykjavík in Iceland, where we chartered an aircraft to fly us, our equipment and supplies to Mestersvik, in Scoresby Land, Greenland. Mestersvik was a couple of huts and a runway on the edge of Davy Sound where, by chance, there was a helicopter. I cannot remember why the helicopter was there; however, we persuaded the pilot to lift our supplies and equipment to our basecamp on the glacier, which saved us back-packing everything in several day-long treks. We camped on the edge of the glacier while we undertook a series of scientific studies. Amongst other things, we counted muskoxen and measured how structures either sank into the glacier ice or ended up perched on towers of ice (perched blocks), depending on the relative rate of melting of the ice around and beneath them. These two studies generated my first published research papers – I narrowly missed becoming a zoologist or glaciologist! While there has been only very limited exploitation of Greenland’s natural resources, the ecology of Greenland is being altered massively by the exploitation of natural resources elsewhere. Climate change caused by carbon emissions has led to the melting of the Greenland ice sheet which, between 1972 and 2023, lost on average 119 billion tonnes of ice per year, contributing a total of 17.3 mm to sea level rise, according to the EU’s Copernicus Programme.
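
As a rough sanity check on the ice-loss figures, the sketch below converts the average annual loss into an equivalent rise in global mean sea level. It assumes a global ocean area of about 3.62 × 10^14 m² and meltwater with a density of 1000 kg/m³; neither number comes from the Copernicus data, so the result is only indicative.

```python
# Rough consistency check of the quoted ice-loss figures.
# Assumptions (not from the post): ocean area and meltwater density below.
OCEAN_AREA_M2 = 3.62e14          # approximate global ocean surface area
WATER_DENSITY_KG_M3 = 1000.0     # fresh meltwater


def sea_level_rise_mm(mass_gt: float) -> float:
    """Convert a mass of melted ice (gigatonnes) to mm of global mean sea-level rise."""
    mass_kg = mass_gt * 1e12                 # 1 Gt = 1e12 kg
    volume_m3 = mass_kg / WATER_DENSITY_KG_M3
    return volume_m3 / OCEAN_AREA_M2 * 1000.0  # metres -> millimetres


years = 2023 - 1972               # 51 years; counting both end years gives 52
total_loss_gt = 119 * years       # 119 billion tonnes per year on average
rise_mm = sea_level_rise_mm(total_loss_gt)
print(f"{total_loss_gt} Gt of ice is roughly {rise_mm:.1f} mm of sea-level rise")
```

With these assumptions the estimate comes out at roughly 17 mm, which is consistent with the 17.3 mm quoted above given the uncertainty in how the end years are counted.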

Research papers:

Patterson EA. Sightings of muskoxen in northern Scoresby Land, Greenland. Arctic, 37(1):61-3, 1984.

Patterson EA. A mathematical model for perched block formation. J. Glaciology, 30(106):296-301, 1984.

Passive nanorheology measurements

What do marshmallows, jelly (or Jell-O), cream cheese and Chinese soup dumplings have in common? They are often made with gelatin. Gelatin is derived from the skin and bones of cattle and pigs through the partial hydrolysis of collagen. Gelatin is a physical hydrogel, meaning that it consists of a three-dimensional network of polymer molecules in which a large amount of water is absorbed, as much as 90% in gelatin. These polymer molecules are cross-linked by hydrogen bonds, hydrophobic interactions and chain entanglements. External stimuli, such as temperature, can change the level of cross-linking, causing the material to transition between its solid, liquid and gel states. This is why jelly sets in the fridge and melts when it’s heated up – the cross-links holding the molecules together break down. This type of responsive behaviour allows the properties of hydrogels to be controlled at the micro and sub-micron scale for a host of applications including tissue engineering, drug delivery, water treatment, wearable technologies, and supercapacitors. However, the design and manufacture of soft hydrogels can be challenging due to the lack of technology for measuring the local properties. Current quantitative techniques for measuring the properties of hydrogels usually focus on bulk properties and provide little data about local variations or real-time responses to external stimuli. My colleagues and I have used gold nanoparticles as probes in hydrogels to map the properties at the microscale of thermosensitive hydrogels undergoing real-time transition from the solid to gel phases [see ‘Passive nanorheological tool to characterise hydrogels’]. This is an extension, or perhaps more accurately an application, of our earlier work on tracking nanoparticles through the vitreous humour of the eye [see ‘Nanoparticle motion-through heterogeneous hydrogels’ on November 6th, 2024].
The novel technique, which yields passive nanorheological measurements, allows us to evaluate local viscosity, identify time-varying heterogeneity and monitor dynamic phase transitions at the micro through to nano scale. The significant challenges of other techniques, such as weak signals due to high water content and the dynamism of hydrogels, are overcome with a fast, inexpensive and user-friendly technology. Even with these advantages, you are unlikely to use it when you are making jelly or roasting marshmallows over the campfire; however, it is really useful for understanding the transport of drugs through biological hydrogels or designing manufacturing processes for artificial tissue.
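
To give a flavour of how tracking a nanoparticle can yield a local viscosity, here is a minimal sketch of one textbook route: compute the mean squared displacement (MSD) of a tracked particle and apply the Stokes-Einstein relation. This is an illustration under the assumption of free two-dimensional Brownian motion in a simple fluid, not necessarily the analysis used in the paper cited below; the function names and the example numbers are hypothetical.

```python
import math

BOLTZMANN = 1.380649e-23  # J/K


def mean_squared_displacement(track, lag):
    """Time-averaged MSD (m^2) of a 2D track [(x, y), ...] in metres at a lag in frames."""
    if lag < 1 or lag >= len(track):
        raise ValueError("lag must be between 1 and len(track) - 1")
    squared = [
        (x2 - x1) ** 2 + (y2 - y1) ** 2
        for (x1, y1), (x2, y2) in zip(track, track[lag:])
    ]
    return sum(squared) / len(squared)


def local_viscosity(msd_m2, lag_time_s, radius_m, temperature_k):
    """Viscosity (Pa.s), assuming free 2D diffusion (MSD = 4 D t) and Stokes-Einstein."""
    diffusion = msd_m2 / (4.0 * lag_time_s)
    return BOLTZMANN * temperature_k / (6.0 * math.pi * diffusion * radius_m)


# Hypothetical example: a 100 nm diameter particle (radius 50 nm) at 25 degrees C
# whose MSD after 0.1 s is 2e-12 m^2.
eta = local_viscosity(msd_m2=2e-12, lag_time_s=0.1, radius_m=50e-9, temperature_k=298.15)
print(f"estimated local viscosity: {eta:.2e} Pa.s")
```

Repeating the calculation for each particle in the field of view would give a map of apparent viscosity; in a gel, where motion is not freely diffusive, the simple relation above would need replacing with a more general model.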

Reference

Moira Lorenzo Lopez, Victoria R. Kearns, Eann A. Patterson & Judith M. Curran, Passive nanorheological tool to characterise hydrogels, Nanoscale, 2025, 17, 15338-15347.

Image: Figure 5 from the above reference showing a hydrogel containing 100 nm diameter gold nanoparticles transitioning to a gel phase as a result of an increase in temperature, with some particles (yellow arrows) at the interface between phases. The image was taken in an inverted optical microscope set up for tracking the nanoparticles.

Star sequence minimises distortion

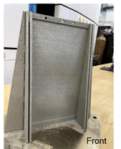

It is some months since I have written about engineering so this post is focussed on some mechanical engineering. The advent of pneumatic and electric torque wrenches has made it almost impossible for the ordinary motorist to change a wheel, because it is very difficult to loosen wheel nuts by hand when they have been tightened by a powered wrench, which most of us do not have available. This has probably made motoring safer but also means we are more likely to need assistance when we have a flat tire. It also means that the correct tightening pattern for nuts and bolts is less widely known. A star-shaped sequence is optimum, i.e., if you have six bolts numbered sequentially around a circle then you start with #1, move across the diameter to #4, then to #2 followed by #5 across the diameter, then to #3 and across the diameter to #6. This sequence is optimum for flanges, bolted joints in the frames of buildings and joining machine parts as well as wheel nuts. We have recently discovered that it works in reverse, in the sense that it is the optimum sequence for releasing parts made by additive manufacturing (AM) from the baseplate of the AM machine (see ‘If you don’t succeed try and try again’ on September 29th, 2021). Additive manufacturing induces large residual stresses as a consequence of the cycles of heat input to the part during manufacturing, and some of these stresses are released when the part is removed from the baseplate of the AM machine, which causes distortion of the part. Together with a number of collaborators, I have been researching the most effective method of building thin flat plates using additive manufacturing (see ‘On flatness and roughness’ on January 19th, 2022). We have found that building the plate vertically layer-by-layer works well when the plate is supported by buttresses on its edges.
We have used two in-plane buttresses and four out-of-plane buttresses, as shown in the photograph, to achieve parts that have comparable flatness to those made using traditional methods. It turns out that the optimum order for the removal of the buttresses is the same star sequence used for tightening bolts, and it substantially reduces distortion of the plate compared to some other sequences. Perhaps, in retrospect, we should not be surprised by this result; however, hindsight is a wonderful thing.
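
The six-bolt order described above can be written as a short function. The sketch below is a literal generalisation of that description to any even number of bolts: visit each bolt in the first half of the circle, followed immediately by the bolt diametrically opposite. The function name is hypothetical, and published tightening procedures for larger bolt counts may prescribe a different criss-cross order, so treat it as an illustration rather than a procedure.

```python
def star_sequence(n_bolts):
    """Return a star order for an even number of bolts numbered 1..n around a circle.

    Each bolt in the first half of the circle is followed by the bolt
    diametrically opposite it, as in the six-bolt example 1, 4, 2, 5, 3, 6.
    """
    if n_bolts < 2 or n_bolts % 2 != 0:
        raise ValueError("this sketch only handles an even number of bolts")
    half = n_bolts // 2
    order = []
    for i in range(1, half + 1):
        order.extend([i, i + half])  # bolt i, then the bolt across the diameter
    return order


print(star_sequence(6))  # [1, 4, 2, 5, 3, 6]
```

For odd bolt counts there is no bolt directly across the diameter, so a different rule would be needed; the sketch raises an error rather than guessing.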

The current research is funded jointly by the National Science Foundation (NSF) in the USA and the Engineering and Physical Sciences Research Council (EPSRC) in the UK; the project was described in ‘Slow start to an exciting new project on thermoacoustic response of AM metals’ on September 9th, 2020.

Image: Photograph of a geometrically-reinforced thin plate (230 x 130 x 1.2 mm) built vertically layer-by-layer using the laser powder bed fusion process on a baseplate (shown removed from the AM machine) with the supporting buttresses in place.

Sources:

Patterson EA, Lambros J, Magana-Carranza R, Sutcliffe CJ. Residual stress effects during additive manufacturing of reinforced thin nickel–chromium plates. IJ Advanced Manufacturing Technology, 123(5):1845-57, 2022.

Khanbolouki P, Magana-Carranza R, Sutcliffe C, Patterson E, Lambros J. In situ measurements and simulation of residual stresses and deformations in additively manufactured thin plates. IJ Advanced Manufacturing Technology, 132(7):4055-68, 2024.